00 · what this is

Central monitoring, built to actually work.

I help clinical teams operationalize centralized monitoring under E6(R3). KRI design, signal-to-action workflows, readiness audits. Hourly, senior, hands-on.

01 · the short version

I'm an independent consultant focused on central monitoring and monitoring oversight.

I work with sponsors and CROs to design KRI frameworks, close the signal-to-action gap, and stand up central monitoring programs that hold up to E6(R3) inspection.

The rest of this page is how I think about the problem, what I actually do inside an engagement, and how to get in touch.

02 · the gap I keep seeing

Central monitoring and monitoring oversight look at the same studies from two angles, and they're barely talking.

Central monitoring sees the KRI signals. Oversight sees the site behavior. But the handoff between them is thin. By the time a signal becomes site-level action, it has usually been translated, summarized, and half-understood by three different people.

The tools exist in pieces. The data exists. But there's no infrastructure connecting the signal to the action. That's the gap, and it's where most of the value is being lost.

Connecting central monitoring and oversight has the potential to unlock a lot, both in how fast problems get caught and in what actually happens once they're caught.

And the timing matters. E6(R3) is fully in force now across FDA, EMA, MHRA, and Health Canada. Centralized monitoring isn't optional anymore. It's written into the guidance as a standalone approach, and inspectors are treating it that way. How do sponsors detect risks in real time? How are oversight decisions documented? How do signals become action? That's the standard sponsors are being held to right now, and the infrastructure to answer those questions cleanly is often missing.

03 · what I help teams build

A working central monitoring system gets built in four phases. Here's what each one looks like, and what I do inside it.

I don't ship a SaaS product and charge a license. I work inside your stack, your KRIs, your escalation rules, and help you build something that fits your program. The four phases below are the shape of a full rollout. Most engagements start in one phase and expand from there.

Centralize the data and make it visible.

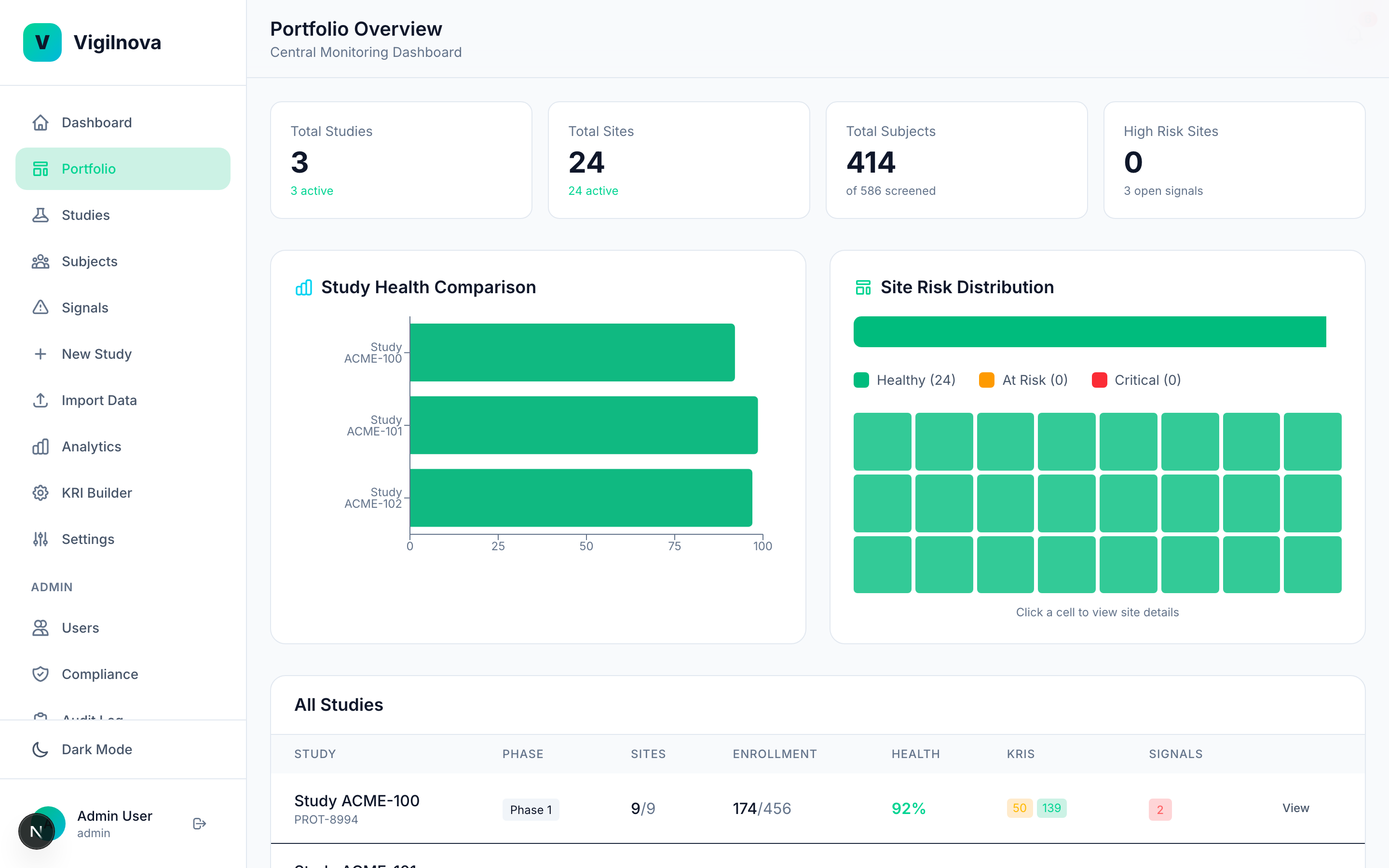

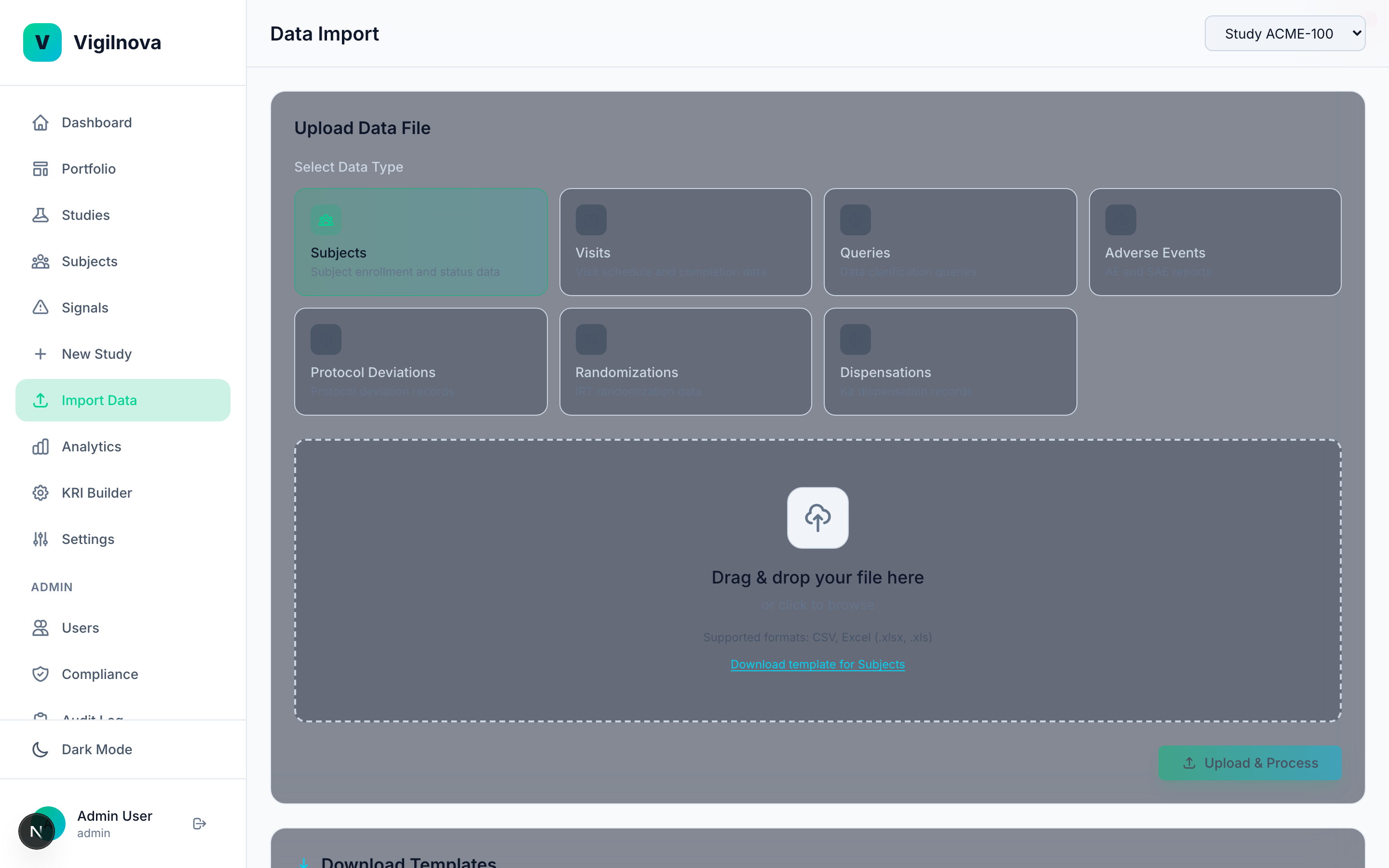

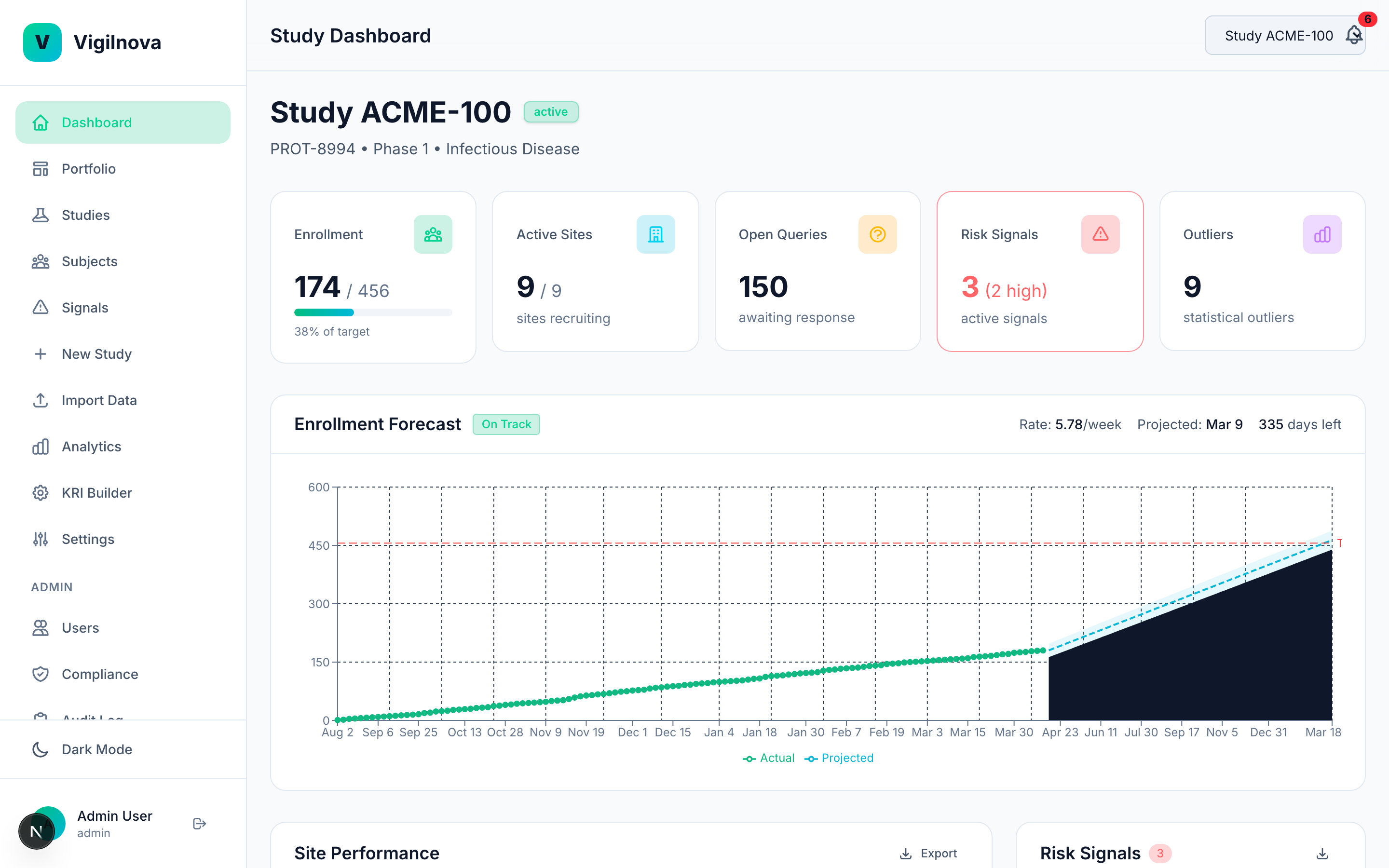

Pull KRI data from EDC and IRT into one place. One dashboard, all studies, updated in real time. I help you scope the data feeds, define the refresh cadence, and get the visibility layer right so the weekly review actually uses live data instead of a pre-meeting spreadsheet pull.

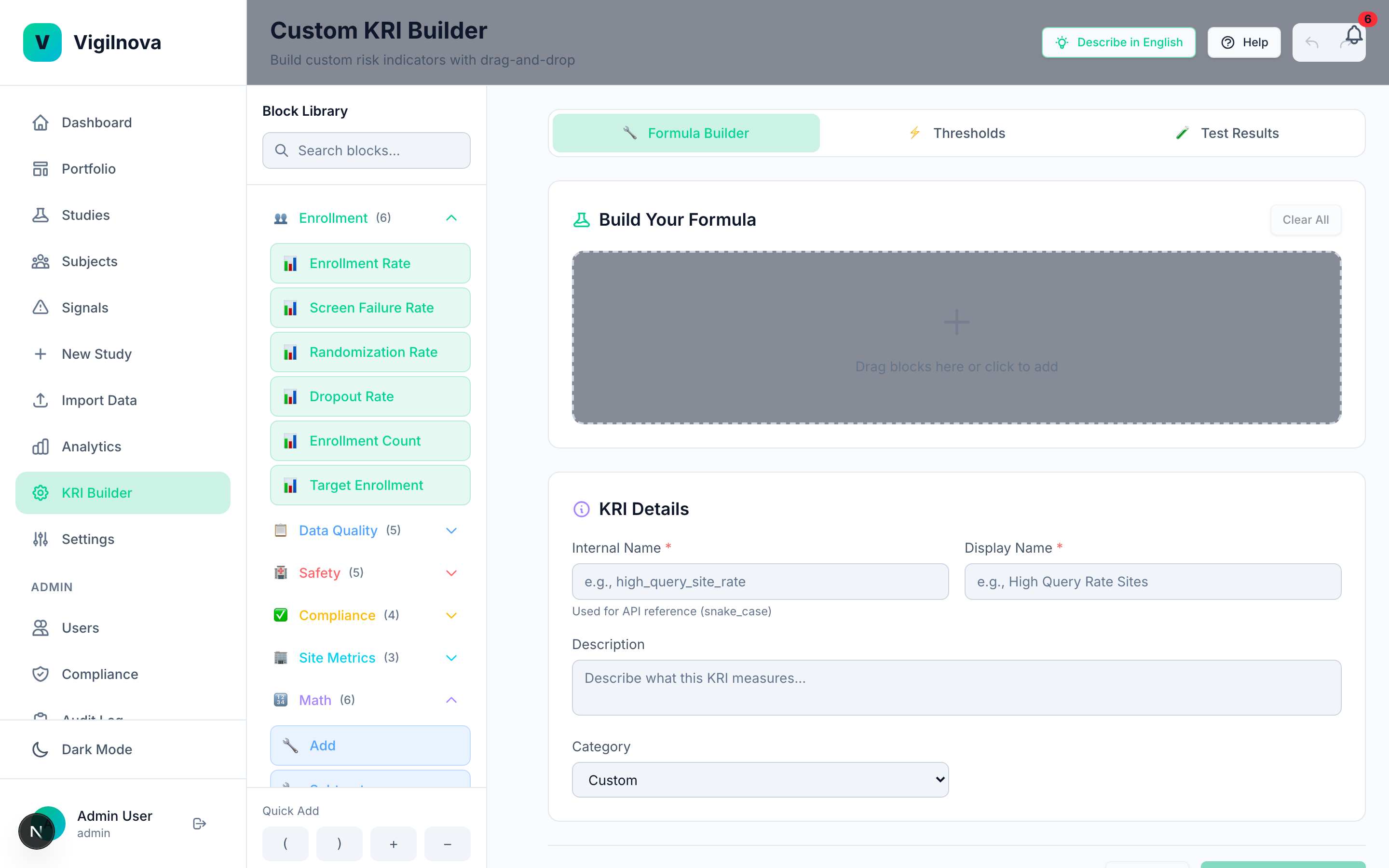

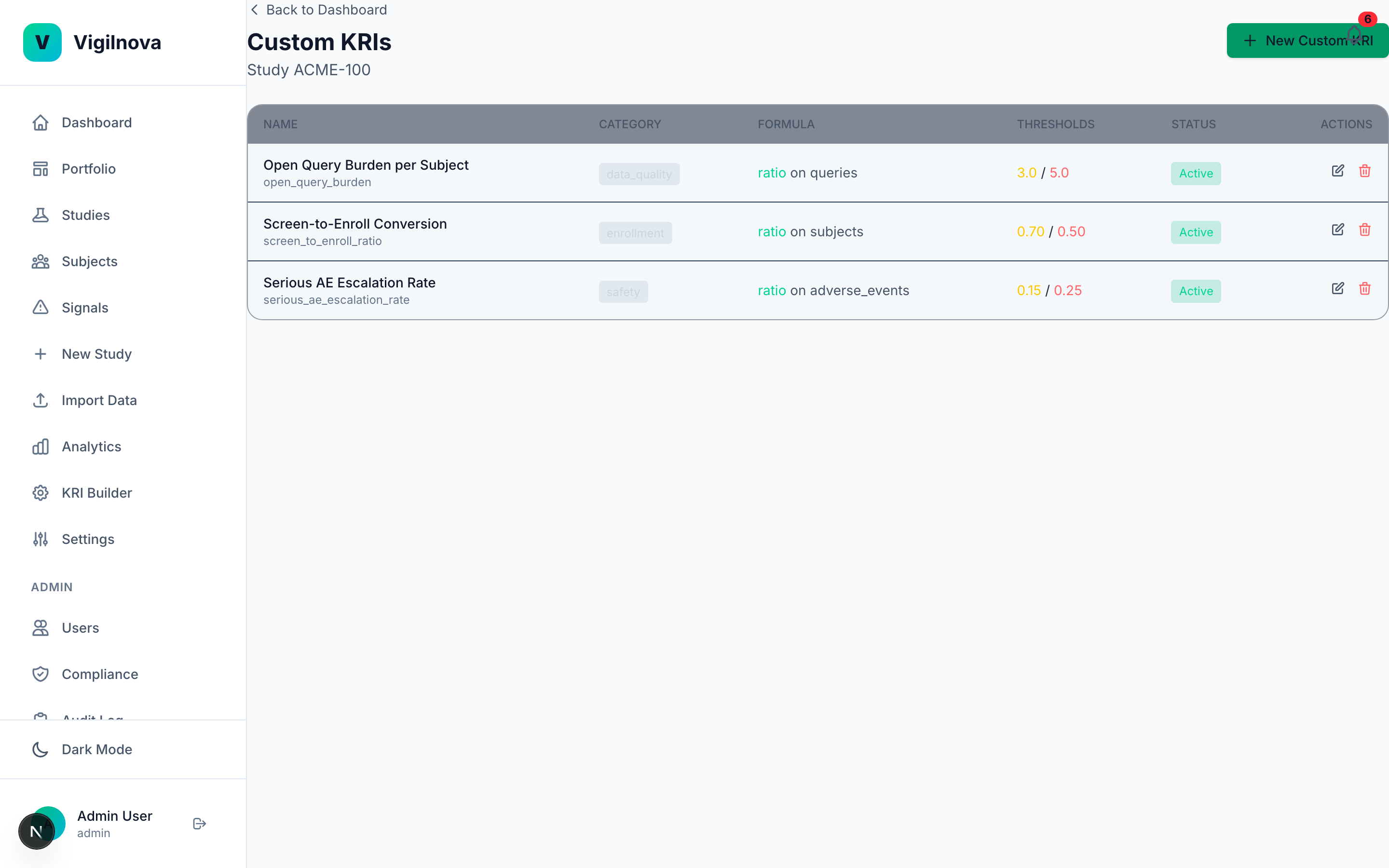

Build the signal engine.

Define KRIs with thresholds that are grounded in real data, not vendor defaults. Run automated statistical monitoring across every site, every week. When a site crosses a threshold, the system fires a signal automatically. I help you write the KRI library, set and justify the thresholds, and design a review cadence that catches issues while they're still cheap to fix.
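As a minimal sketch of the threshold step, the Python below flags sites whose KRI value sits well above the cross-site median, using a median-and-MAD rule. It is one illustrative choice, not a recommended default: the `fire_signals` function, the `Signal` fields, the cutoff of 3, and the sample query rates are all hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Signal:
    site_id: str
    kri: str
    value: float
    threshold: float


def fire_signals(kri_name, site_values, cutoff=3.0):
    """Flag sites whose KRI value exceeds median + cutoff * scaled MAD.

    site_values maps a site ID to that site's current KRI value.
    The 1.4826 factor scales the MAD so it is comparable to a standard
    deviation when the data are roughly normal.
    """
    values = list(site_values.values())
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # No spread across sites, so no data-driven threshold can be set.
        return []
    threshold = center + cutoff * 1.4826 * mad
    return [
        Signal(site, kri_name, value, threshold)
        for site, value in sorted(site_values.items())
        if value > threshold
    ]


# Hypothetical weekly query rates per 100 data points, by site.
query_rates = {"S01": 2.0, "S02": 2.2, "S03": 1.9, "S04": 2.1, "S05": 6.0}
signals = fire_signals("query_rate", query_rates)
print([s.site_id for s in signals])  # -> ['S05']
```

A median-based rule is used here because one extreme site inflates a mean-and-standard-deviation threshold enough to hide itself when the site count is small. Whatever rule a program picks, the justification for the cutoff belongs in the KRI library next to the threshold.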

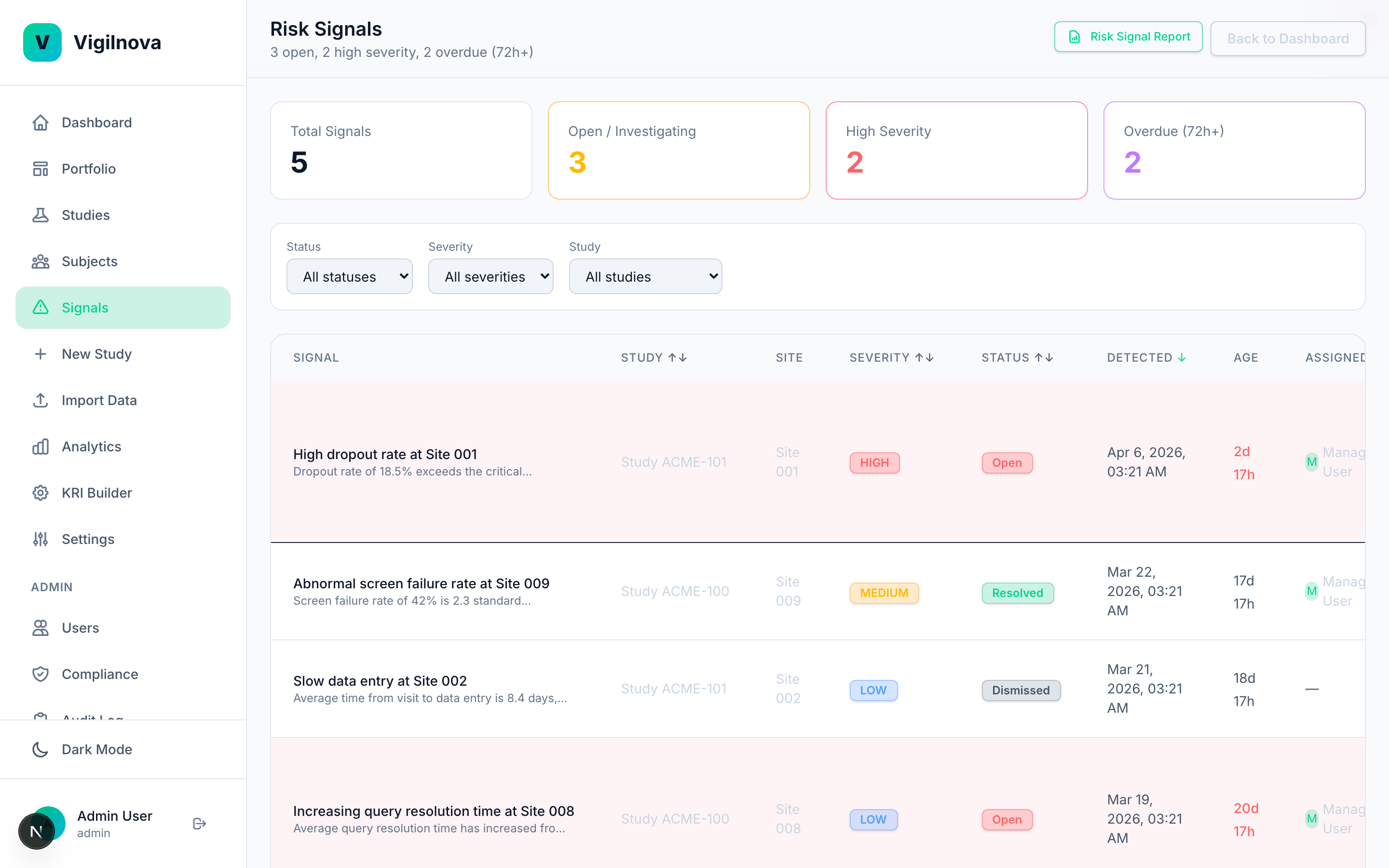

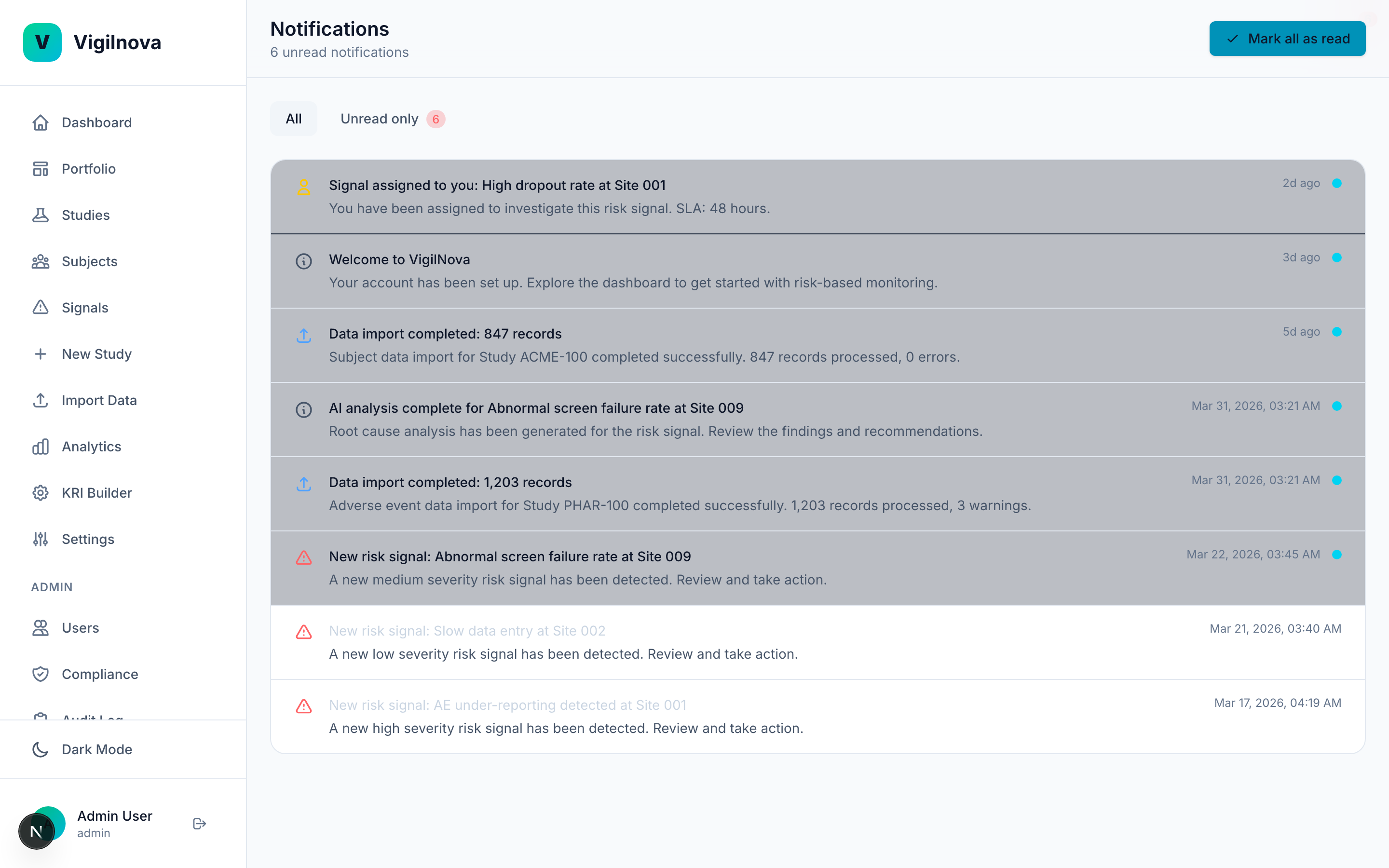

Close the loop between signal and action.

This is the part most programs are missing. When a signal fires, it needs to go somewhere. It gets assigned. Someone owns it. There's an SLA. Oversight sees it, acts on it, and resolves it. The whole thing is tracked and auditable. I help you design the assignment and escalation workflow, define ownership across central monitoring and site management, and document it in a way an inspector can follow.
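To make the loop concrete, here is a minimal sketch of a tracked signal with an owner, an SLA, and an append-only audit trail. It assumes a simple three-state lifecycle (open, assigned, resolved); the `TrackedSignal` class, its field names, and the five-day SLA are hypothetical and would need to match your own SOPs.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class TrackedSignal:
    signal_id: str
    site_id: str
    kri: str
    opened_at: datetime
    sla: timedelta
    owner: Optional[str] = None
    status: str = "open"
    audit_trail: list = field(default_factory=list)

    def _log(self, actor, action, at):
        # Entries are only ever appended, never edited or removed.
        self.audit_trail.append(
            {"at": at.isoformat(), "actor": actor, "action": action}
        )

    def assign(self, owner, actor, at):
        self.owner = owner
        self.status = "assigned"
        self._log(actor, f"assigned to {owner}", at)

    def resolve(self, actor, note, at):
        if self.owner is None:
            raise ValueError("cannot resolve a signal that nobody owns")
        self.status = "resolved"
        self._log(actor, f"resolved: {note}", at)

    def is_overdue(self, now):
        # Overdue means unresolved and past the SLA deadline.
        return self.status != "resolved" and now > self.opened_at + self.sla


opened = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
sig = TrackedSignal("SIG-001", "S05", "query_rate", opened, timedelta(days=5))
sig.assign("site.manager", actor="central.monitor", at=opened + timedelta(hours=2))
print(sig.is_overdue(opened + timedelta(days=6)))  # -> True
```

The point of the sketch is that ownership, deadline, and history live on the same record, so the question "who had this, and for how long" can be answered from one place rather than reconstructed from email.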

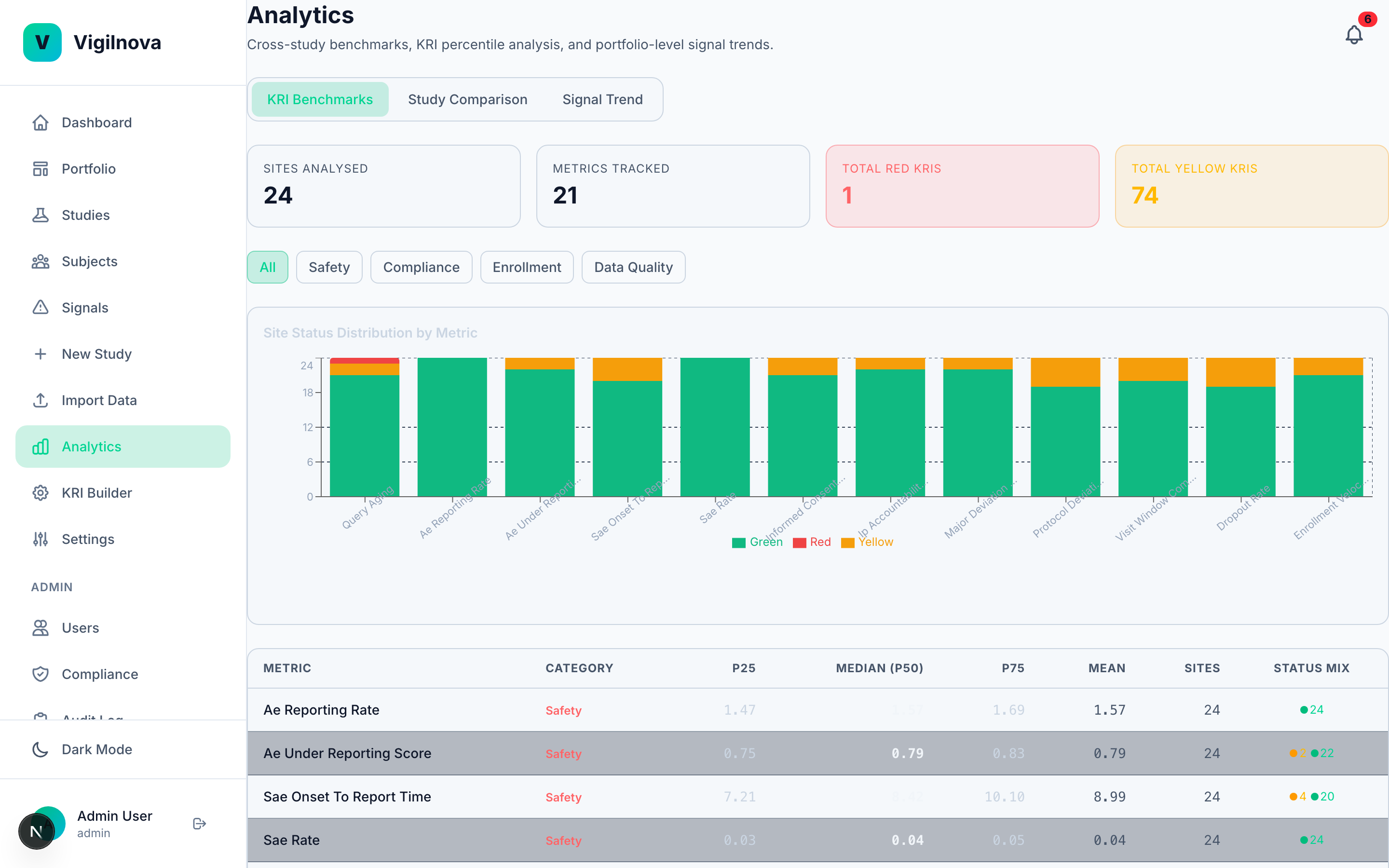

Add the intelligence layer.

Once the data flows and the signals close, you can start asking harder questions. Which sites will have problems next quarter? Why did this site's dropout rate spike? What does the enrollment curve actually predict? I help you separate the questions worth answering with real statistics from the ones that are sold as AI but that a simple control chart solves just as well.
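For a sense of how little machinery a control chart needs, here is a minimal p-chart sketch for a site's dropout rate, using the standard three-sigma upper limit for a proportion. The `p_chart_flags` function, the 10% baseline rate, and the monthly counts are hypothetical; in practice the baseline would come from your own historical data.

```python
from math import sqrt


def p_chart_flags(periods, baseline_rate):
    """Return labels of periods whose dropout rate exceeds the upper control limit.

    periods is a list of (label, dropouts, enrolled) tuples.
    The limit for each period is baseline + 3 * sqrt(p * (1 - p) / n),
    so periods with fewer enrolled subjects get a wider limit.
    """
    flagged = []
    for label, dropouts, enrolled in periods:
        if enrolled == 0:
            continue  # no subjects at risk, nothing to evaluate
        rate = dropouts / enrolled
        sigma = sqrt(baseline_rate * (1 - baseline_rate) / enrolled)
        if rate > baseline_rate + 3 * sigma:
            flagged.append(label)
    return flagged


# Hypothetical monthly counts for one site: (label, dropouts, enrolled).
site_history = [("M1", 4, 40), ("M2", 5, 42), ("M3", 14, 45)]
print(p_chart_flags(site_history, baseline_rate=0.10))  # -> ['M3']
```

This answers "did the rate really spike, or is it noise at this sample size" without a model. The questions that remain after a check like this are the ones more likely to justify heavier statistics.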

04 · how engagements work

Start with one study. Prove it works. Then bring the rest in.

The fastest way to roll out central monitoring isn't to boil the ocean. It's to pick one pilot study, ship the whole loop for that one, and let the results do the arguing for phase two. I've seen this approach de-risk both the technical and the political side of these programs.

That said, pilots like this fail in predictable ways. Here's how I keep them from drifting.

- Parallel-run. Don't replace.

  For the pilot, the new system runs alongside whatever you do today. Compare outputs weekly. This kills the "what if yours misses something ours catches" argument before it starts, and gives you a fallback if we hit a bug at month two.

- Define success metrics on day one. With numbers.

  Pilots die when "success" stays fuzzy. Concrete targets: signal detection latency cut from X days to Y. Monitor hours per week spent pulling data cut from X to Y. N site issues caught earlier than they'd have been caught otherwise. If we can't measure it, we can't sell phase two.

- Timebox to 90 days with an explicit go / no-go.

  Shorter than 90 days and we don't see enough signal. Longer and momentum dies, scope creeps, and it turns into a side project everyone forgets about. Set the decision date on day one. Calendar invite everyone. No surprises.

- Pick the pilot study deliberately.

  Not the cleanest (won't prove value). Not the messiest (won't finish). Pick something active, mid-enrollment, and representative of how you'd want the rest of the portfolio to run.

- Plan study #2 on purpose, not by default.

  Phase two isn't "scale up to ten studies." It's one more study, chosen to stress-test a dimension the pilot didn't. Different therapeutic area, different data source, different size. That's how we learn whether the system generalizes before you commit to it across the portfolio.
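The detection-latency target from the list above is simple to compute once the parallel run is logging dates. A minimal sketch, assuming each issue is recorded with the date it started and the date each process detected it; the `median_latency_days` helper and every date below are illustrative, not real results.

```python
from datetime import date
from statistics import median


def median_latency_days(events):
    """Median number of days between issue start and detection.

    events is a list of (issue_started, issue_detected) date pairs.
    """
    return median([(detected - started).days for started, detected in events])


# Hypothetical parallel-run log: the same three issues, as caught by each process.
legacy = [
    (date(2025, 1, 1), date(2025, 1, 22)),
    (date(2025, 2, 3), date(2025, 2, 17)),
    (date(2025, 3, 10), date(2025, 4, 7)),
]
pilot = [
    (date(2025, 1, 1), date(2025, 1, 5)),
    (date(2025, 2, 3), date(2025, 2, 9)),
    (date(2025, 3, 10), date(2025, 3, 13)),
]
print(median_latency_days(legacy), median_latency_days(pilot))  # -> 21 4
```

The median is used rather than the mean so a single late catch doesn't dominate a small pilot sample.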

Engagements are hourly. I'm senior, I write my own code where code is part of the answer, and I don't mark up subcontractor time because there are no subcontractors. Rate and estimated scope go out after a first call.

05 · the proof I've done the work

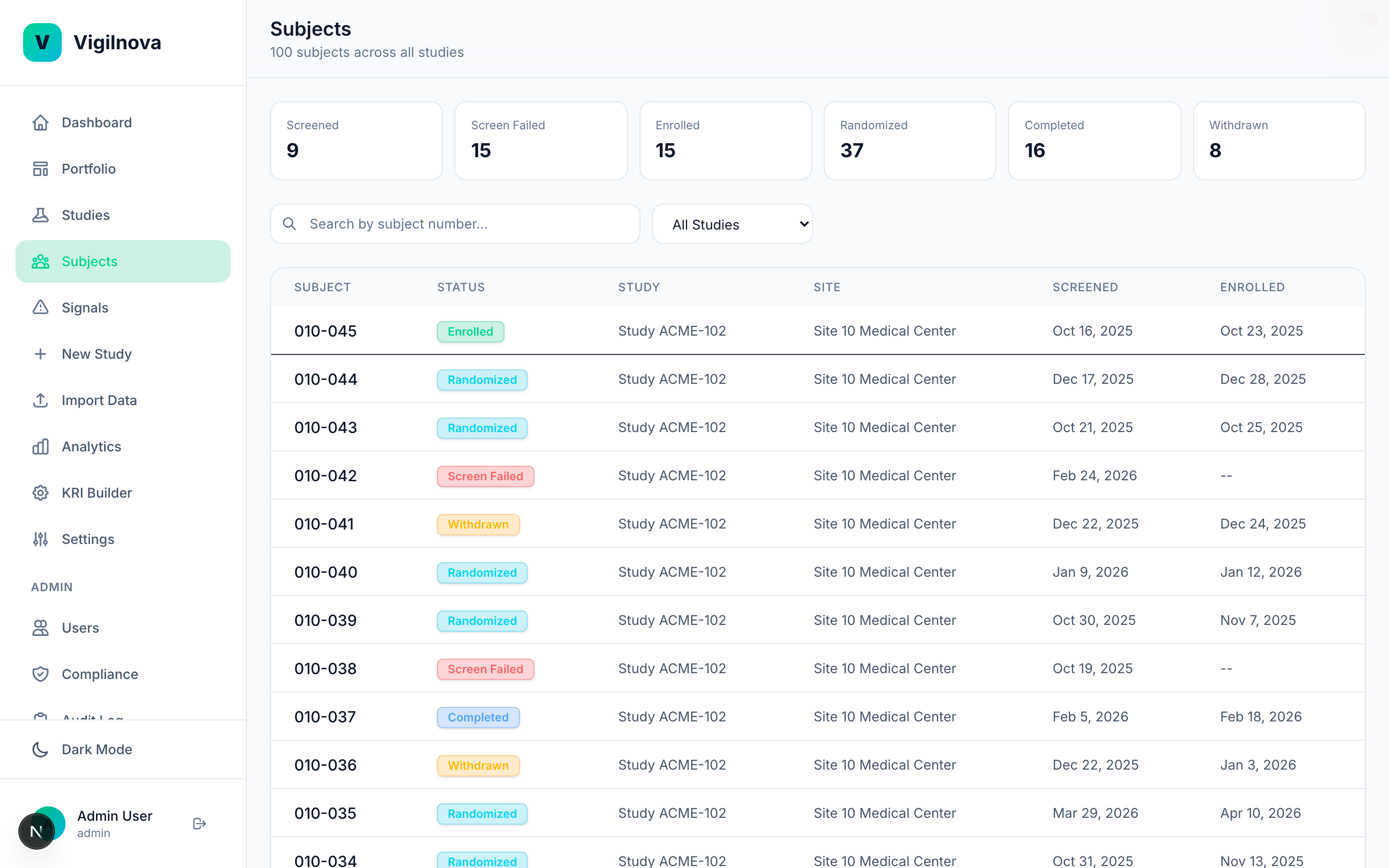

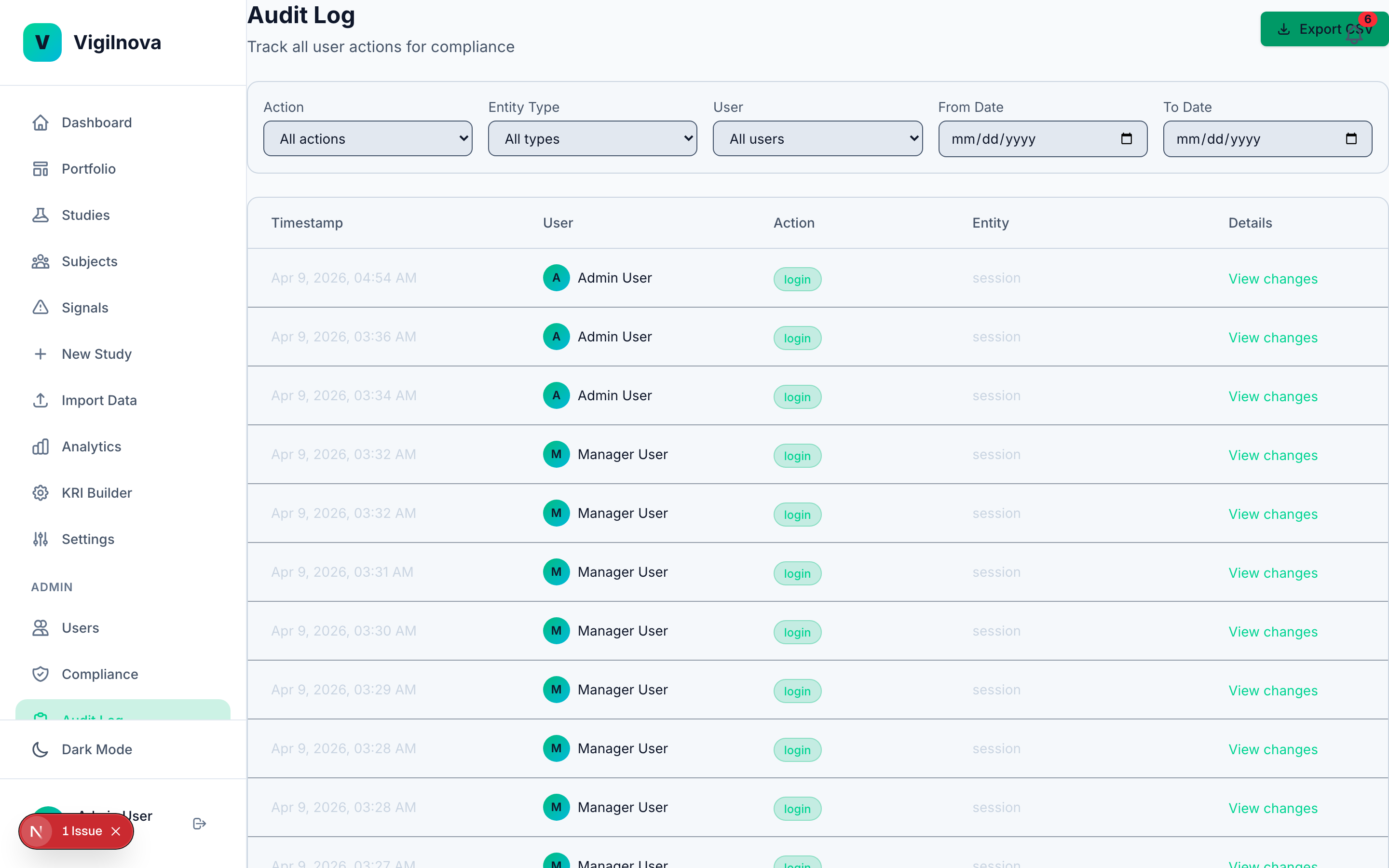

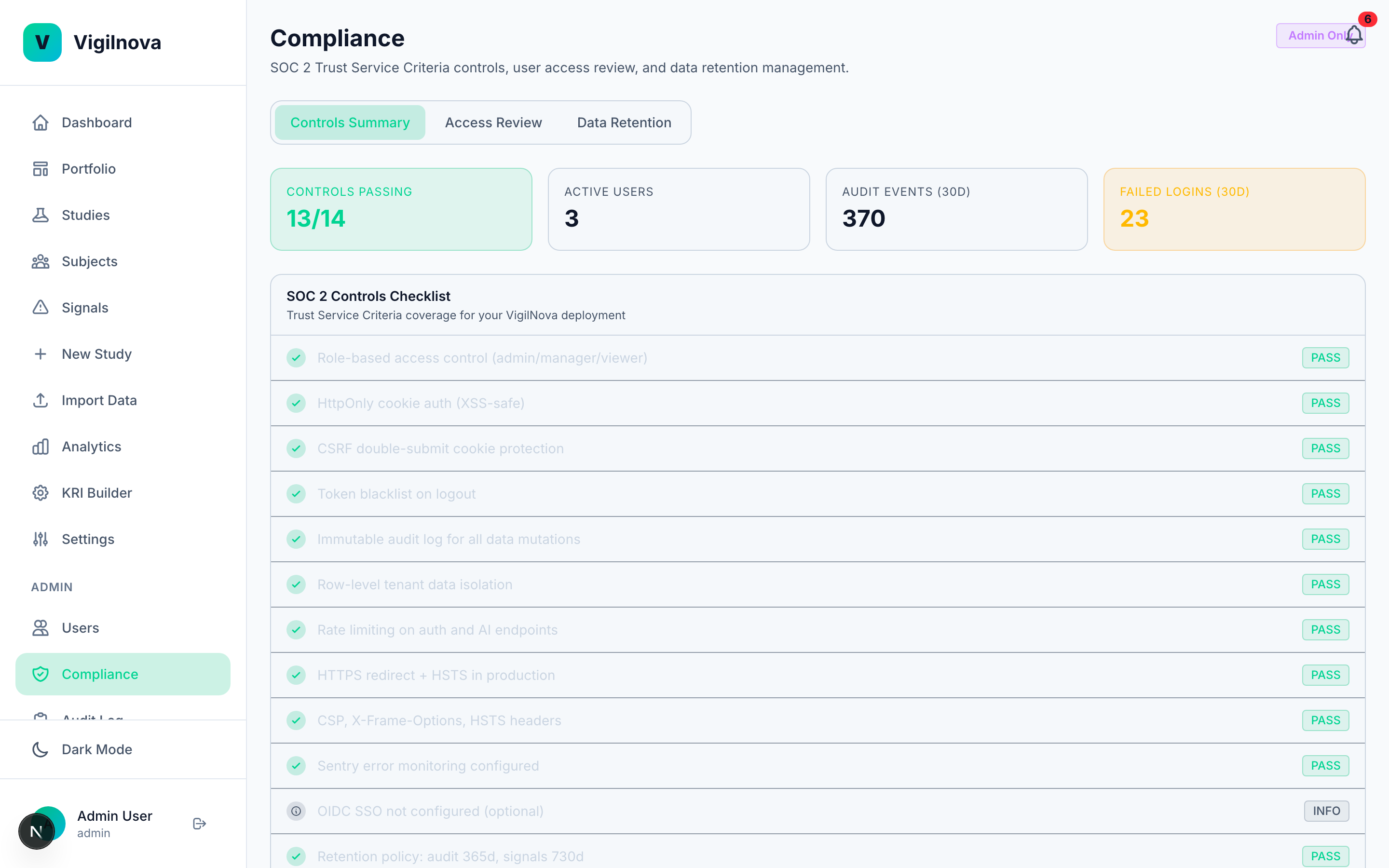

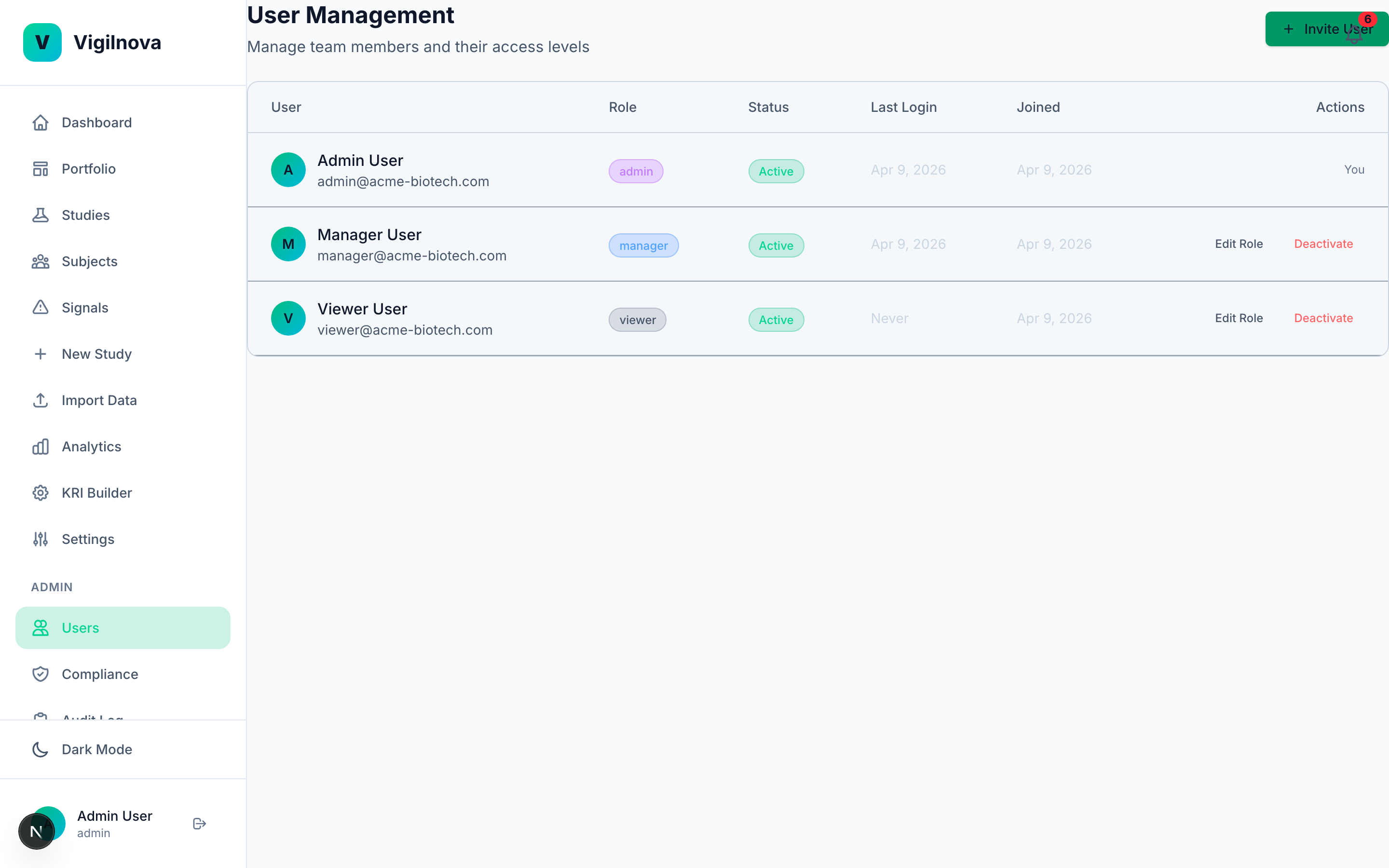

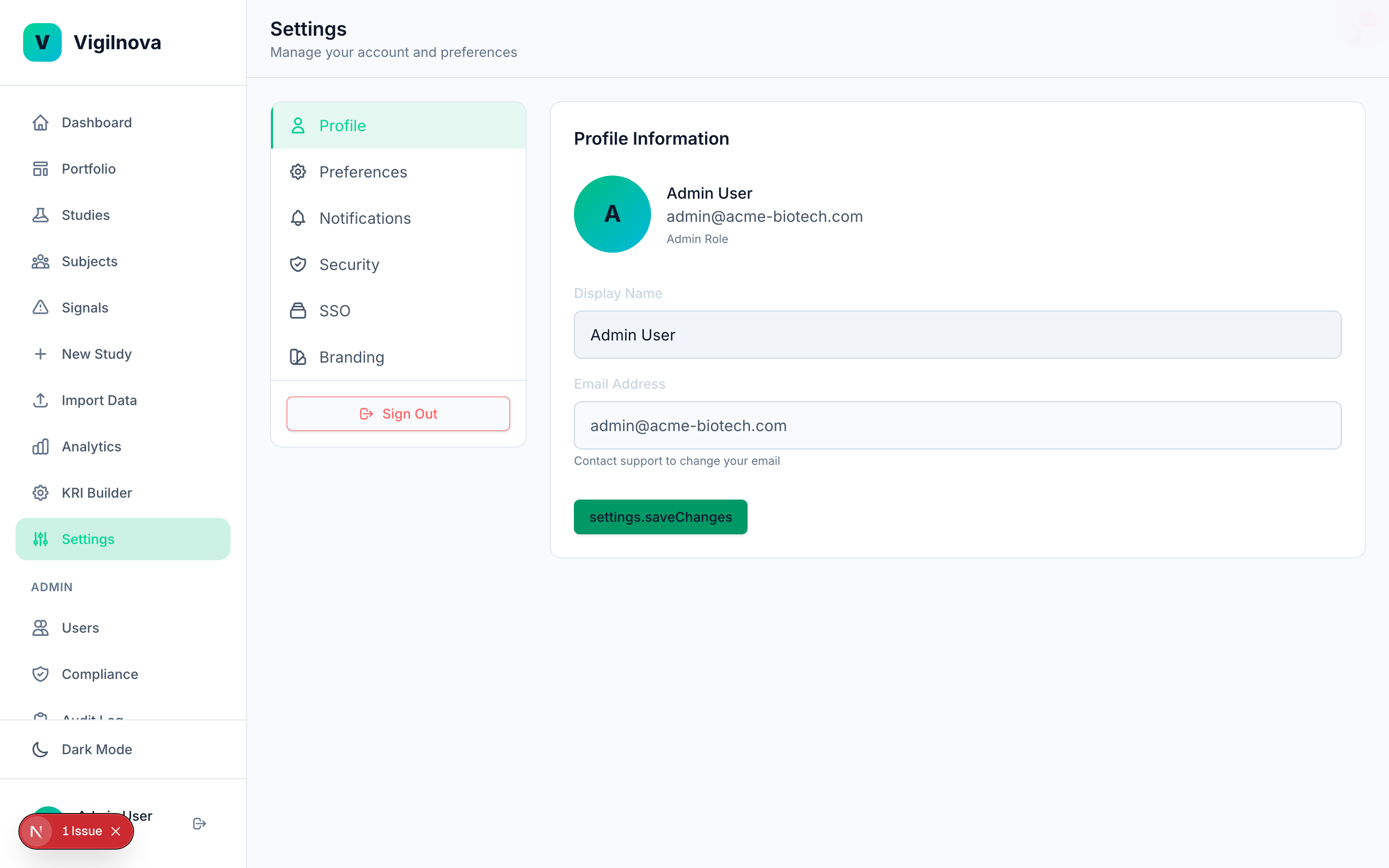

To stress-test my own thinking, I built a working central monitoring platform called VigilNova.

Not a deck. Not wireframes. A full-stack platform with a Postgres database, a FastAPI backend, a React frontend, seeded demo data, and a complete audit trail. It isn't for sale and isn't part of any engagement. It exists because I wanted to make sure the ideas I recommend hold up against a real end-to-end system. The screenshots on this page are from it.

Happy to walk through it live on a call if it's useful for grounding a scoping conversation in something concrete.

06 · where I fit

The engagements that tend to work best.

- KRI library and threshold design.

  You have a list of KRIs, but nobody can defend the thresholds. I build a defensible library: what you're tracking, why, where the lines are, and how they move as more data comes in. Delivered as documentation your QA group can sign off on.

- Signal-to-action workflow buildout.

  You have signals firing, but they disappear into email or a shared drive. I design the assignment, SLA, and escalation layer. Who owns what, how long they have, and what the audit trail looks like when an inspector asks.

- E6(R3) readiness audit.

  A short, focused assessment against the new guidance. What's documented, what isn't, where the gaps are, and what to prioritize before the next inspection or sponsor audit. Delivered as a written report with concrete recommendations, not a color-coded PowerPoint.

- Fractional central monitoring lead.

  Ongoing, part-time. I run or co-run your central monitoring function while your team matures the internal capability. Typical engagement is one to two days a week.

07 · get in touch

If you think there's a fit, send me a note.

Tell me a little about your study or your program, what's working, and what isn't. I'll come back with questions, a rough shape of what an engagement could look like, and a rate.

Shivam